How to Protect Seniors from AI Scams: A Complete Guide

September 09, 2025 • 6 min read


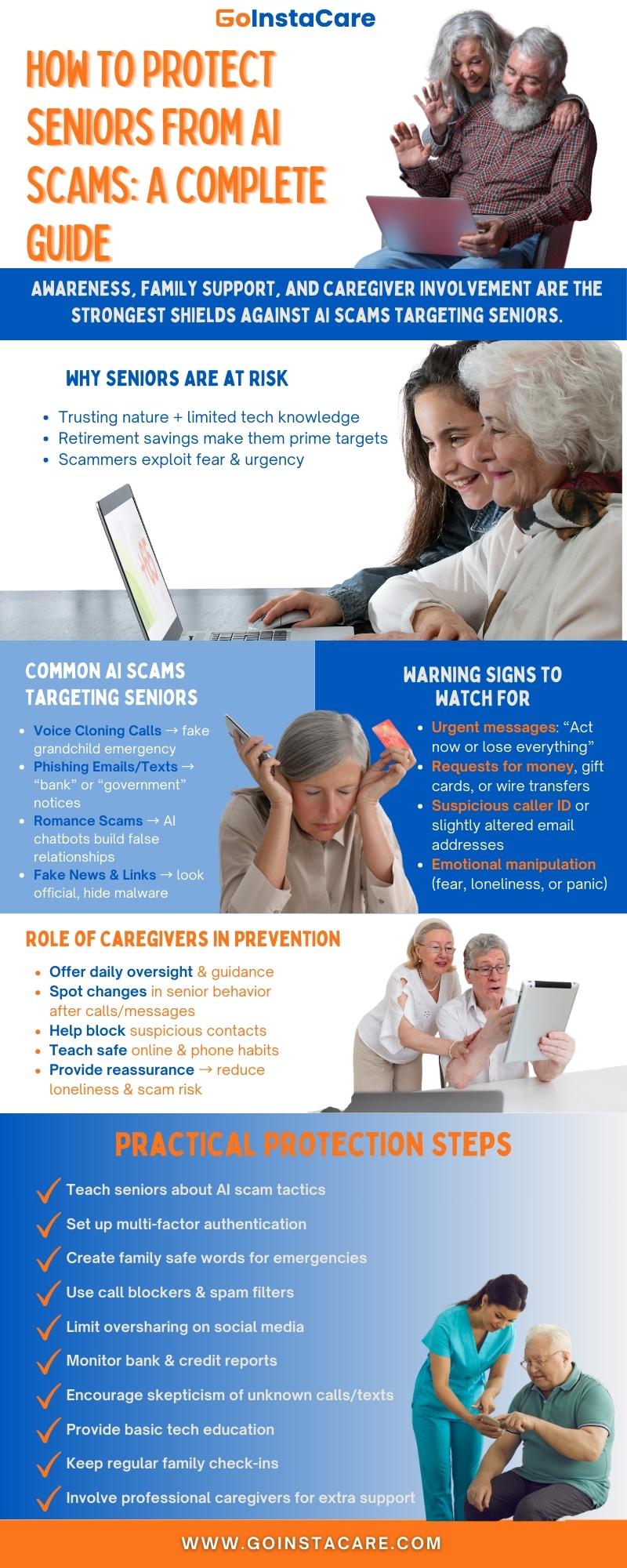

Seniors are frequent targets of increasingly sophisticated AI scams because they tend to be trusting and are often less familiar with new technologies. These scams, which can include cloned voices that sound like family members or convincing online messages, can cause both financial loss and emotional pain. Protecting seniors starts with knowing the warning signs and spotting them early. Families need to know how scammers work and what steps they can take to protect their loved ones.

Why Are AI Scams a Growing Threat to Seniors?

AI fraud is growing quickly because new tools can make fake content sound and look almost authentic. Voice cloning lets scammers copy a family member's voice from just a few seconds of audio. Deepfakes can produce videos that look real, while AI-generated emails and texts sound more natural and persuasive than earlier scams. These methods make it harder for anyone, especially older individuals, to distinguish between what's real and what's not.

Scammers often go after older people because they see them as more trusting and less tech-savvy. Many older people did not grow up with smartphones, apps, or AI-powered technologies, so they may miss subtle signs of fraud. Scammers exploit this gap to create urgency and fear, for example by claiming that a loved one is in danger and needs money immediately. Seniors are also attractive targets for financial crime because many have retirement savings.

This combination of less familiarity with technology and greater financial stability puts older people at higher risk than younger people. Understanding why these scams work so well is the first step toward strengthening defences and keeping older people safe in a world where technology can be misused.

Common Types of AI Scams Targeting Seniors

Voice cloning phone calls are among the most dangerous AI scams targeting older people today. Using a brief audio clip, scammers can imitate a loved one's voice. They can then call and pretend to be a distressed child or grandchild who needs money. This convincing imitation can scare seniors into acting before they can check the facts.

Another common type of fraud is fake notifications from banks or government agencies. Seniors might think AI-generated emails and texts are authentic because they mimic official language, logos, and forms. These messages generally ask for personal information such as Social Security numbers, bank account login details, or payment information.

AI has also made romance scams more common. Scammers create fake accounts and use chatbots or AI-generated images to keep up conversations that seem real. Older people looking for companionship may be drawn into emotional relationships that cost them money.

Fake news and phishing emails are still widespread, and AI can now make them appear error-free. These emails may spread false information or trick seniors into clicking on harmful links. Families who know about these kinds of fraud are better able to teach their seniors how to pause and protect themselves.

Warning Signs Families Should Watch For

Scammers often use fear and urgency to get older people to act right away. Callers or messages that insist you must act quickly to prevent harm, such as claiming that a grandchild has been arrested or that a bank account is in danger, are often trying to scam you. This pressure makes it hard for seniors to think clearly or question the story. Families should remind their loved ones that legitimate organizations rarely demand immediate decisions.

Another warning sign is a request for money or sensitive information. Scammers may ask older people for their bank account numbers or Social Security numbers, or ask them to pay with gift cards or wire transfers, whether over the phone, in text messages, or by email. Requests for unusual payment methods like these are strong signs of fraud.

A close look at the caller ID or email address can also reveal a scam, although neither can be fully trusted. Scammers can subtly alter an official-looking email address to trick the eye, and caller IDs can be spoofed to look like they come from trusted sources. Seniors should be taught to double-check contact details, verify claims independently, and ask family members or trusted caregivers for help.

Practical Steps to Protect Seniors from AI Scams

Teach seniors about common AI scam methods like voice cloning and fake texts.

For added security, set up multi-factor authentication on bank, email, and social accounts.

Create family safe words to confirm emergencies before sending money.

Encourage skepticism toward unknown calls, texts, and emails, even if they sound convincing.

Use call-blocking apps and spam filters to reduce scam attempts.

Remind seniors to avoid oversharing personal details on social media.

Regularly check financial accounts and credit reports for unusual activity.

Provide online tech education so seniors can learn how to react to new scams.

Maintain family communication and check-ins to discuss suspicious contacts.

Involve professional senior caregivers when needed, as they can provide additional support.

How Can Caregivers Play a Role in Scam Prevention?

Protecting older people from AI fraud is easier when they have trusted caregivers who are consistently there to help. Seniors are less likely to fall for scams when a caregiver is available. Caregivers can also teach seniors about safe practices and warn them about dangerous scams. They make technology safer for older adults by adding to the support families already provide.

Another crucial part of prevention is watching for strange emails or calls. Caregivers often notice how a senior seems after a phone call or online contact, such as whether they look worried, confused, or unwilling to talk. They can step in by asking questions, consulting family members, or helping to block suspicious numbers and addresses. Because of this proactive involvement, seniors are less likely to become victims, and families can relax knowing that their loved one is getting prompt, focused help recognizing and avoiding scams.

FAQs

What are the most common AI scams targeting seniors?

The most common AI scams that target older people include voice cloning calls, fake bank or government messages, AI-generated romance scams, and phishing emails. These scams exploit trust, fear, and a lack of technical knowledge to steal money or personal information.

How do I protect my elderly parents from voice cloning scams?

Protect your parents from voice cloning scams by teaching them to verify calls with a family member, use safe words for emergencies, and contact trusted caregivers for guidance before doing anything.

Can caregivers help prevent AI fraud?

Yes. Caregivers can help stop AI fraud by teaching seniors, keeping track of strange calls or messages, checking out suspicious requests, and serving as a reliable source of assistance.

What tools block AI scam calls or texts?

Tools that help block AI scam calls or texts include call-blocking apps, spam filters, phone carrier services, and antivirus software that protects against phishing. These tools help seniors avoid fraudulent messages and make them less likely to fall for AI-generated scams.

What should families do if a senior has already been scammed?

Family members should immediately notify banks, credit card companies, and the proper authorities if a senior has been defrauded. This helps stop further losses and supports any attempt to seek relief. They should also update passwords, monitor accounts, and consider getting help from professionals or caregivers.

Conclusion

The first step in stopping AI fraud that targets older adults is to raise awareness and strengthen family support. Teaching seniors how to recognize warning signs and think before they respond can significantly reduce the risk. Trusted caregivers can add safety by watching for strange calls or emails.

Using technology like call-blocking apps makes protection even stronger. GoInstaCare offers Instant Quality Care for Your Loved Ones, helping seniors get the support and safety they need.
